"The future is the worst thing about the present." Gustave Flaubert (1821-1880), French novelist


Monday June 1, 2026

Twenty-six Years Online!

The Phrenicea website, a scenario of the future, is celebrating a Quarter Century plus One!

Online since April 2000, it’s tediously hand-coded in HTML (Hypertext Markup Language), the language web pages were built in before the advent of convenient website builders like Wix and GoDaddy.

Consequently, it retains the look of websites from that early time period. It was designed for the computer screen, as it preceded smartphones.

After 26 years, it's now less a vision of the future and more a snapshot of the past!

Still true nevertheless is a Phrenicea catchphrase, “The Future—It’s All in Your Head!”

posted by John Herman 8:12 AM

Wednesday October 1, 2025

A Quarter Century Online!

The Phrenicea website, a scenario of the future, is celebrating a Quarter Century!

Online since April 2000, it’s tediously hand-coded in HTML (Hypertext Markup Language), the language web pages were built in before the advent of convenient website builders like Wix and GoDaddy.

Consequently, it retains the look of websites from that early time period. It was designed for the computer screen, as it preceded smartphones.

After 25 years, it's now less a vision of the future and more a snapshot of the past!

Still true nevertheless is a Phrenicea catchphrase, “The Future—It’s All in Your Head!”

posted by John Herman 7:23 AM

Saturday February 1, 2025

A Vision and a Snapshot!

The Phrenicea website has been online since April 2000 and was coded by hand in native HTML (Hypertext Markup Language), which is the most basic computer language of the web.

It retains, in style and function, the look and feel of web pages in the early 2000s. It's designed for computer viewing only, as it preceded the advent of smartphones.

Consequently, it's now both a vision of the future and a snapshot of the past!

posted by John Herman 7:39 AM

Sunday September 1, 2024

Evolution is the Future!

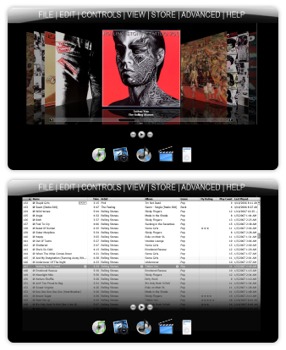

We thought we'd revisit Phrenicea's "Evolution" page here since it's quite rare for a website to have been online since the year 2000. We'll look back at Phrenicea's first steps and progress through the years.

*****

"Nothing endures but change." Heraclitus 540 BC – 480 BC

evo•lu•tion

A process of continuous change from a lower, simpler, or worse condition to a higher, more complex, or better state.

(Merriam-Webster)

*****

Occasionally we like to look back and reflect on Phrenicea's first steps and our progress so far. (What we immodestly consider the history of the future!)

It's quite rare for a website to be online for more than two decades! Here we'd like to share some of that progress with you. By clicking on the images, you can download the short story that started it all, or view several snapshots of the website as it developed over time.

Allow us to indulge you with the evolution of the Phrenicea scenario!

Click image to download

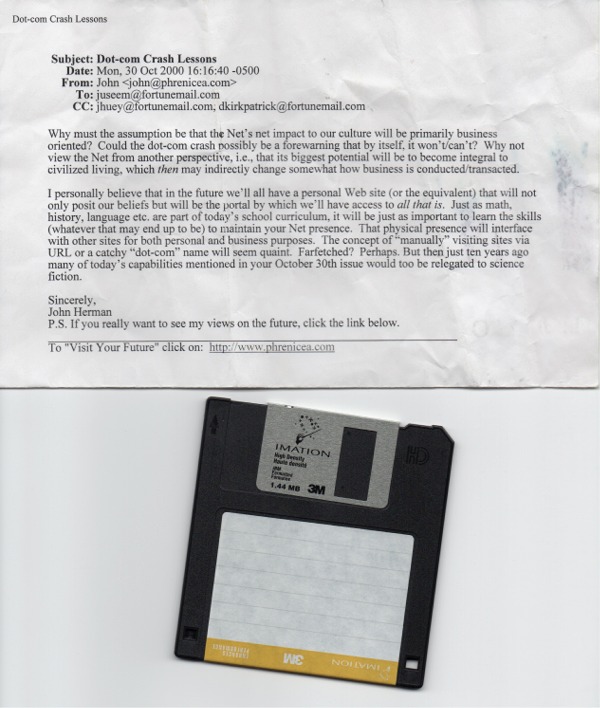

Way back in May 1999, at the height of the dotcom boom, the short story "The Engagement of Phrenicea" predicted the demise of the Internet and web — and suggested both would be replaced with a central controlling entity called Phrenicea.

That following November, in an attempt to sell the short story online — well in advance of Stephen King(!) — it was uploaded to fatbrain.com, a hyped-up e-publishing website, to be sold as "eMatter." Then in March 2000, at about the same time Fatbrain was rebranded as MightyWords, it was decided that a portion of the short story's content would be translated into a website. But should we name the website Phrenicea?

Click image to view


Happy Birthday Phrenicea.com!

"Ten [or more!] years is a long time on the Internet." Time magazine

The brand-new phrenicea.com website, uploaded April 2000.

All of three pages, it generated fantastic excitement — among ourselves! Imagine that these pages could be viewed by anyone worldwide! It was really a thrill at the time.

The format was a hideous Microsoft FrontPage template plus some elementary HTML code. (Thank goodness we were not yet known to any search engines!)

Click image to view

By mid-August 2000, the official Phrenicea logo is launched, along with a more readable format. Typically defying conventional wisdom, we adopt the use of frames with orphan pages that are problematic to search engine directories.

Click image to view

In October 2000, our still-novice webmaster discovered how to display linked images, which enhanced the visitor experience.

When the dotcom bubble burst and our overblown e-publishing dream vanished, we decided to present the entire scenario on the web and later offered the short story for free.

Click image to view

Phrenicea today. Often misspelled and mispronounced, the site is still a work in progress. The web version of the scenario now goes well beyond the original short story.

Not yet a household word, Phrenicea continues to extend its reach across cyberspace. We like to think — some would say thankfully — that there's no other site quite like it.

Our overarching goal is to continue to be bold, defy convention and branch out into uncharted territory.

*****

Time will tell...

posted by John Herman 10:36 AM

Saturday June 1, 2024

How evolved are you?

You may be SURPRISED how much of your life revolves around FOOD!

Early humans spent 98% of their time hunting and gathering for food. Of the 112 average waking hours in a week, how many for you are related to food?

Use the example below as a template to modify and fit to your lifestyle.

Also include time devoted to takeout or restaurant dining.

Here’s a general example:

TOTAL 1

TYPICAL DAY without shopping

(3 meals/day)

Meal planning, .5 hour

Choosing recipe, .5 hour

Preparing food, 1 hour

Cooking food, .5 hour

Eating, 1.5 hours (.5 hour x 3)

Cleaning up, 1 hour

Total:

3 days x 5 hours = 15 hours

TOTAL 2

TYPICAL DAY with shopping

(3 meals/day)

Meal planning, .5 hour

Preparing shopping list, .5 hour

Travel to shop, .5 hour

Shopping, .5 hour

Travel home, .5 hour

Unloading, putting food away, .5 hour

Choosing recipe, .5 hour

Preparing food, 1 hour

Cooking food, .5 hour

Eating, 1.5 hours (.5 hour x 3)

Cleaning up, 1 hour

Total:

3 days x 7.5 hours = 22.5 hours

TOTAL 3

Takeout/restaurant day:

1 day = 2.5 hours

GRAND TOTAL

7 days = 40 hours (15 + 22.5 + 2.5)

Calculate % (x) using archived high school brain cells:

40/112 = x/100

Cross multiply to get 4000 = 112x

x = 4000/112

x ≈ 35.7, or about 36%

RESULTS:

More than 1/3 of waking hours devoted to food!

Questions to ponder:

Does a higher percentage, in general, reflect a healthier lifestyle in this day and age, assuming more time is spent preparing fresh food and less time consuming convenient processed meals?

How should we define evolved? By a higher or lower %?
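For readers who would rather not dust off those high school brain cells, here is a minimal Python sketch of the worksheet arithmetic above. It assumes, as the post does, 16 waking hours a day (112 a week), and the hour values are simply the example figures from the worksheet; the names such as `weekly_food_hours` are hypothetical and only for illustration.

```python
# A minimal sketch of the "How evolved are you?" worksheet arithmetic.
# Assumption (from the post): 16 waking hours a day, so 7 x 16 = 112 per week.
WAKING_HOURS_PER_WEEK = 7 * 16

# Example hours per activity, taken from the worksheet; edit to fit your lifestyle.
DAY_WITHOUT_SHOPPING = {
    "meal planning": 0.5,
    "choosing recipe": 0.5,
    "preparing food": 1.0,
    "cooking food": 0.5,
    "eating (3 meals x 0.5 hour)": 1.5,
    "cleaning up": 1.0,
}

# A shopping day adds the five shopping-related steps to the same routine.
DAY_WITH_SHOPPING = {
    **DAY_WITHOUT_SHOPPING,
    "preparing shopping list": 0.5,
    "travel to shop": 0.5,
    "shopping": 0.5,
    "travel home": 0.5,
    "unloading, putting food away": 0.5,
}

TAKEOUT_DAY_HOURS = 2.5


def weekly_food_hours(days_without_shopping, days_with_shopping, takeout_days):
    """Total food-related hours for one week's mix of day types."""
    return (
        days_without_shopping * sum(DAY_WITHOUT_SHOPPING.values())
        + days_with_shopping * sum(DAY_WITH_SHOPPING.values())
        + takeout_days * TAKEOUT_DAY_HOURS
    )


def percent_of_waking_hours(food_hours):
    """Solve food_hours / 112 = x / 100 for x by cross multiplying."""
    return food_hours * 100 / WAKING_HOURS_PER_WEEK


hours = weekly_food_hours(3, 3, 1)
print(f"{hours} hours/week = {percent_of_waking_hours(hours):.1f}% of waking hours")
# prints: 40.0 hours/week = 35.7% of waking hours
```

To fit your own lifestyle, change the hour values or the mix of days; for example, a week with more takeout days only changes the last argument to `weekly_food_hours`.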

How evolved are you?

Time will tell...

posted by John Herman 9:12 AM

Wednesday May 1, 2024

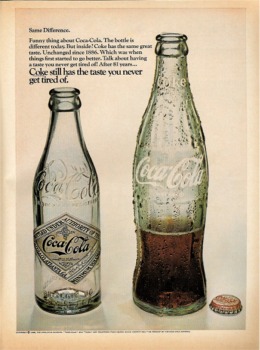

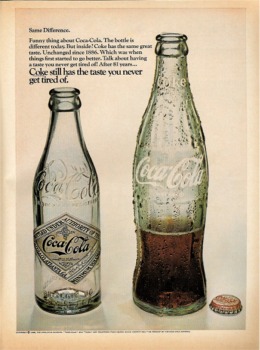

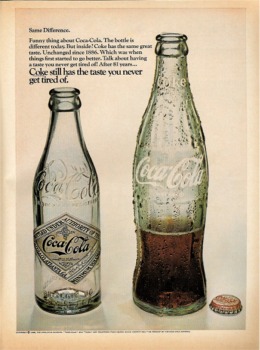

Celebrating Cans!

The Canned Food Information Council declared February of each year to be National Canned Food Month!

In the dark cold of February, when few fruits and vegetables grow, canned goods are a convenient and inexpensive dietary staple for many.

The 19th century saw a canning boom. Companies like Campbell Soup, Heinz, and Borden were selling cans of food at lightning speed. Before canning, the four main ways of food preservation were salting, drying, sugaring, and smoking.

Today, canned foods are still incredibly popular. There was a time not that long ago when canning was the only way to get a variety of vegetables and even fish and meat (like SPAM & ham).

The Boomer Generation in the 1950s grew up with the “Ho, Ho, Ho!” of The Jolly Green Giant, and his cans were in almost everyone’s pantry. And did you know Le Sueur, Minnesota, is the home of The Jolly Green Giant?!

Today we take frozen and refrigerated shipping, both interstate and international, for granted.

There’s no comparison to fresh or frozen, but don’t knock canned too much. If things keep deteriorating globally, we may have to rely more on cans again.

Time will tell...

posted by John Herman 7:39 AM

Monday January 1, 2024

Pick Me! Pick Me! Me Me!

The following scenario was originally presented in 2005. Imagine your life's purpose not related to the present, but only to get your brain in shape for near-eternity. Are YOU only living for the future?

*****

Ambition is a human characteristic that comes in many forms and varieties, and in varying quantities. Some have little to none, probably due to never being fortunate enough to find their niche in life. Others seem to have unbounded energy for accomplishment.

Ambition today is exhibited in such familiar forms as the quest for wealth, the striving for professional perfection, physical prowess, the attainment of fame, the upheaval of the status quo, or the unselfish acquisition of knowledge for its own sake.

By midcentury, when Phrenicea becomes an overwhelming controlling entity, personal ambition becomes scarce. An unintended consequence of Phrenicea's societal insinuation was the apparent difficulty in overcoming the inherent complacency that accompanied instantaneous access to all the world's knowledge, instinctive physical skills, as well as total comfort and health, albeit within the confining quarters of a cubicle.

For the few determined to resist contentment and sloth, energies were now channeled towards a single goal — that of higher learning and thought for the purpose of increasing the "value" of their brain — to Phrenicea.

Since there would be no limit to the size of the Phrenicea braincomb, nor to the number of brains that comprise it, whoever could exercise and improve their brain sufficiently in life to become eligible for Phrenicea selection would potentially have their brain survive nearly forever within one of a vast conglomeration of hexagonal chambers.

However, only brains that qualified with sufficient brainpower to pass a rigorous test regimen would be chosen. Consequently, there could be no higher level of status attained than to be designated early in life as a braincomb candidate.

*****

Imagine your life's purpose not related at all to the present, but only to prepare your brain for near-eternity in service to others within a Phrenicea braincomb chamber!

All this may sound farfetched or silly — but are YOU too ignoring the present and in some way foolishly living only for the future?

Time will tell...

posted by John Herman 8:17 AM

Friday September 1, 2023

One Smart Cookie...

Help Wanted

FORTUNE COOKIE WRITER:

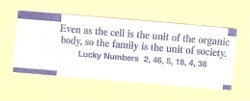

PhD in biology required. Sociology BA helpful. Brevity and a belief in the supernatural a plus. Only smart cookies will become fortune(ate) candidates.

The above want ad sounds ridiculous, of course. But that is what I envisioned recently after opening a Chinese fortune cookie to find the following fortune:

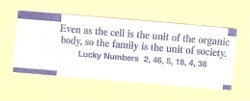

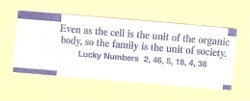

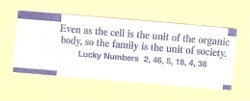

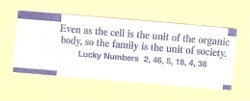

"Even as the cell is the unit of the organic body, so the family is the unit of society." A socio-bio-based aphorism in a fortune cookie? Could this have been created by an underemployed graduate of biology or sociology?

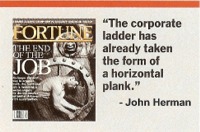

Perhaps this is early evidence bolstering the Phrenicea website scenario of the future that envisions not enough jobs to employ most of the world's population. As higher education becomes the norm globally, college graduates will have to assume lower-level jobs formerly taken by those with high school diplomas or less.

Already, more and more sales associates, telemarketers, customer service reps, bank tellers, bookkeepers, etc. are bringing to the job the benefit of a four-year degree.

But who would have thought about a "fortune cookie writer"?

With the popularity of Chinese take-out, there may be a strong demand for offbeat fortune writers! Fortune cookies are a lot like horoscopes. Our intellectual side tells us none of it is to be believed. Yet, just as many feel compelled to read what the day may bring for their zodiac sign, opening a fortune cookie for its bit of wisdom or prediction can be irresistible. And if the theme just happens to coincide with a life situation, that provides reinforcement to look forward to next time.

Ever the skeptic, through the years I saved a few choice fortunes that had some relevance, to see if their messages would ever be realized. So here goes:

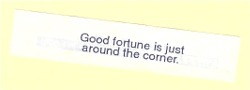

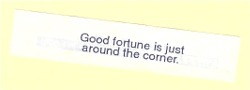

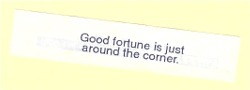

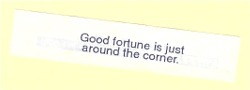

"Good fortune is just around the corner." Unfortunately, I haven't turned this corner yet.

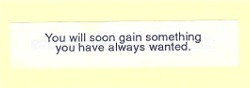

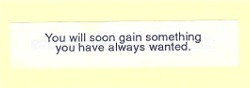

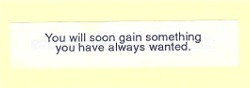

"You will soon gain something you have always wanted." This might have come true, but it was probably so trivial I didn't realize it.

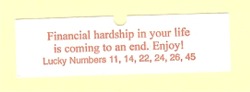

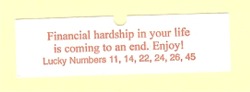

"Financial hardship in your life is coming to an end. Enjoy!" All I know is that I'm still waiting for this end to come.

"Two small jumps are sometimes better than one big leap." I have no idea why I kept this one. Perhaps I'll save it for the Artemis III crew that is scheduled to set foot on the moon in December 2025. Given the reduced gravity, two small jumps might be better than another giant leap for mankind.

Even though it's evident I've not had much luck with fortune cookies, I still can't wait to order Chinese take-out again. Will I crack open another smart cookie to find an abstruse, recondite maxim with a scientific theme? Or will I have the misfortune of reading just another silly platitude? I'm really hoping for the former.

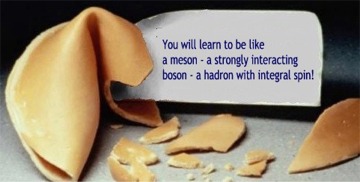

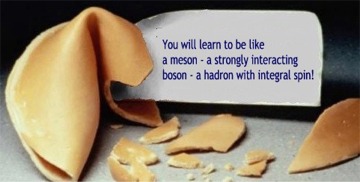

Forever puerile, I'll imagine my next postprandial surprise to be the work of some underemployed subatomic particle physicist, shedding an optimistic photon beam on my two left feet so I can finally show off some dance floor prowess:

"You will learn to be like a meson — a strongly interacting boson — a hadron with integral spin!" I'll certainly save this one. "Dancing with the Stars," look out!

*****

Imagine — physics graduates crafting cookie fortunes. An inane exaggeration? Perhaps, but let us hope that's not how the cookie crumbles for many other fields of study the world over.

Time will tell...

posted by John Herman 8:17 AM

Monday May 1, 2023

An "UNscientific" View on Science Education

Having become aware of a Facebook page with the unusual name UNscientific, we found several posts of interest that are worthy of wider distribution.

With the appropriate permission obtained, here is a reprint of a recent post regarding science education, which is of great interest given January's Two-Cents blog, in which a student didn't realize until college that the intent of math and science was to understand and explain the natural world.

Was he that dense, or are today's students just as clueless? To him, they were high school courses to be passed in order to move up to the next grade. The student depicted—years after catching up to his potential—was accepted into Mensa, suggesting where the real source of failure lay.

The public educational system needs an entire revamp. The political hysteria in regard to separation of church and state is ironically detrimental to both science and humanities education.

Our youth are brainwashed to accept evolution and do not see through the fallacious indoctrination. They’re taught to not think big thoughts and reflexively recite the pages of their outdated textbooks.

Young eager minds are being robbed of the wonder of discovery and the excitement of the unknown. That unknown instead is being artificially defined by biased, obstinate promulgators of establishment evolutionary dogma; militant proselytizers of secularism, insecure and intolerant of alternative opinions or scenarios—afraid to lose control of the unprovable random/chance scenario they worship.

Most of the stultified victims will mature while remaining dutiful products of the lamentable inculcation, regurgitating vehemently the Darwinian-based pablum they’ll unfortunately be burdened with for a lifetime.

Only the most intelligent will eventually realize the injustice and hypocrisy of being deprived curiosity and shielded from exposure to real science in accordance with the scientific method.

Let’s finally supplant the entrenched nonsense of passing off biased conjecture and extrapolation as scientific theory with an honest acknowledgement that static, unearthed artifacts—sans interpretation and storytelling—are not evidence worthy of science. Instead, they’re fodder for a broad range of inspired scenarios that can encourage creative thought and incentive to ponder and pursue exploration of imaginative, uncharted paths.

UNscientific. Reprinted with permission. All rights reserved.

Time will tell...

posted by John Herman 7:12 AM

Sunday January 1, 2023

One Student's Epiphany

Many of today's high school and college students wonder (vociferously!) why they need to memorize boring equations, formulae and other seemingly trivial or useless information.

What is often not emphasized by their teachers is the origin and significance of these man-made expressions, which essentially describe the workings of nature. They're not taught that many of these discoveries required lifetimes of effort, often by iconoclasts, eccentrics, heretics and recluses willing to shed lots of sweat and probably tears in order to solve nature's mysteries.

Still, many students past and present have stumbled upon these truths on their own, often with epiphanic delight.

Below is one finally-getting-serious college student's "Epiphany," written way back in 1971, stripped bare with numerous misspellings illustrating a misspent youth, yet with genuine astonishment that this seemingly simple realization took so long to gel. It was handwritten, pen to paper, and found in a musty old box after four decades. (Today's student might blog such a personal thought, with little chance of rediscovery years hence.)

A message to those who are in the same plight as I: If you question the ways of the sciences — concepts — rediculous [sic] equations — symbols etc and become completely fatalistic toward them — think back a moment to your forefathers who devised these methods.

These are just building blocks to understanding. Just as you need tools to produce a manual task — tools are essential in building knowledge.

Nature does what is does without any influences (until recently however). Man has not and will never harness nature by merely understanding its processies [sic]. This form of study makes use of abstract concepts to make understanding less tedius [sic] and to standardize the methods of expressing our understanding of them possible and eliminate a caotic [sic] consequence.

This must be remembered if excelence [sic] in any science is achieved.

Written by J. Herman circa 1971

Perhaps someday education will go beyond mere memorization and copying teachers by rote to include a real appreciation of our current state of knowledge — knowledge that allows us to not only understand the workings of nature, but to leverage and alter them for our benefit, as well as our peril.

Time will tell...

posted by John Herman 7:16 AM

Thursday December 1, 2022

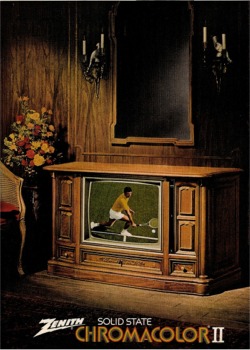

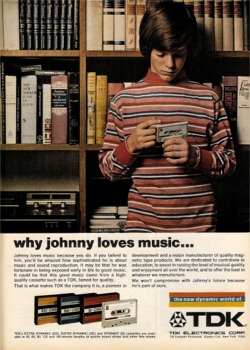

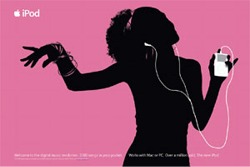

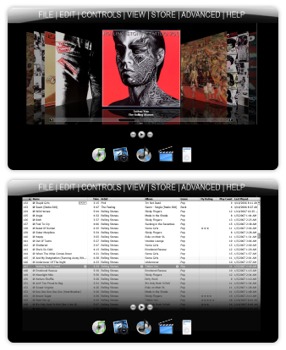

Ahhhhhhhhh!!!

I can't take it anymore!

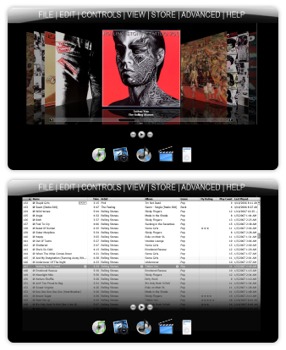

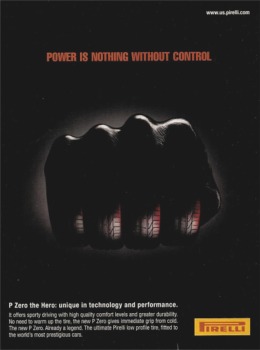

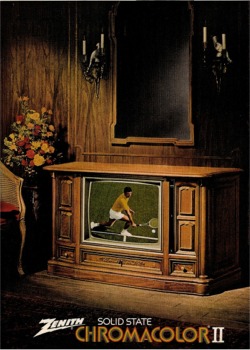

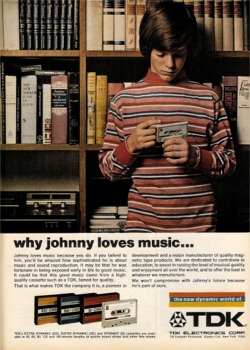

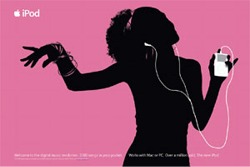

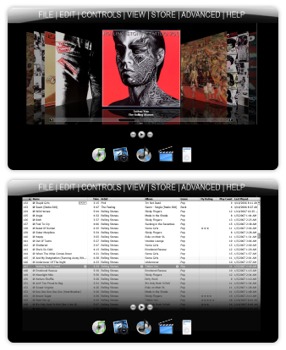

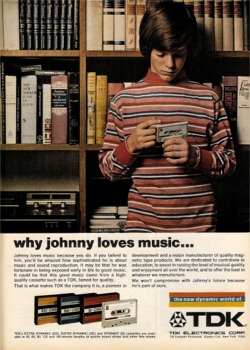

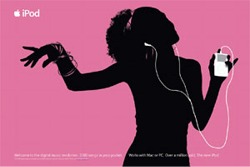

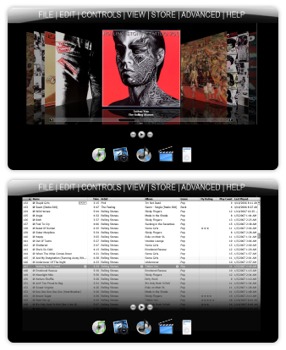

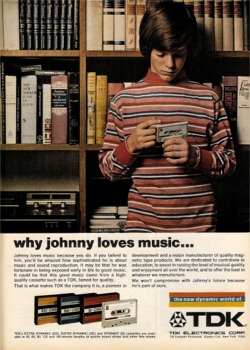

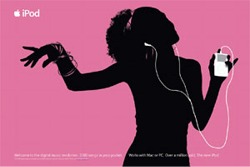

She's overloaded — with too many choices!

She's already a victim of that nasty, stress-mongering character icon from the future, "Miss Paradox," who, by not wanting you to miss anything, causes you to end up missing everything. Technology-enabled leisure becomes a confusing and overwhelming dilemma.

Here's a typical modern day's internal struggle brought on by today's wealth of technology...

"What should I listen to?!"

"What should I stream?!"

"I can't decide!!!"

"Oh, the stress of it all!!!"

"Too much of a good thing!"

"Ahhhhhhhhh!!!"

*****

Striving for affluence? It's a double-edged sword. If this isn't you yet, it could be — if you're seeking inordinate material wealth.

And here's a warning! It is only going to get worse by midcentury, when Phrenicea becomes part of our lives.

More than just music and video and all the cool hi-tech gadgets which all become obsolete — imagine having every bit of information from the beginning of recorded history instantly available to you upon demand. Just by thinking!

Here might be a typical day's internal struggle at midcentury, helped along by that spiteful Miss Paradox...

"What do I think about?"

"What do I want to learn?"

"What music should I listen to — or compose?"

"Whose memory would I like to relive?"

"I can't decide!!!"

"Ahhhhhhhhh!!!"

Time will tell...

posted by John Herman 8:39 AM

Tuesday November 1, 2022

Still a Hot Topic!

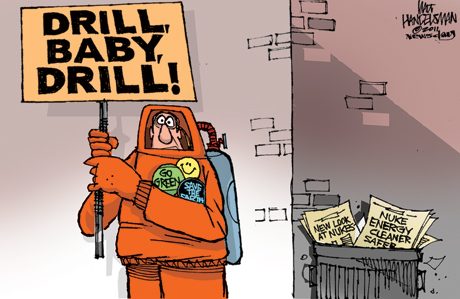

We thought we'd revisit Phrenicea's "Hot Topic" page here because, although the terminology has changed from Global Warming to Climate Change, not much else has changed in terms of progress during the past two decades.

*****

"We are entering the 'Oh shit' era of global warming." Bill McKibben, environmentalist and author

Global warming is a hot topic (pun intended!), especially at the Phrenicea website. The worldwide consequences of global warming contribute significantly to the Phrenicea consortium's fictional founding in 2014 (as depicted in the 1999 short story "The Engagement of Phrenicea") and its rapid worldwide acceptance. With Phrenicea, many of the global-warming contributors we take for granted no longer exist by midcentury. There are no cars, planes, phones, TVs, computers/electronic gadgets, pets, agricultural or meat farms, centralized utilities, oil refineries, et al.

What is global warming?

Simply stated, it's a general trend of increasing surface temperatures throughout the world.

What is causing it?

Man's (anthropogenic) enhancement of the "greenhouse effect" resulting from industrial activity.

What is the greenhouse effect?

The greenhouse effect is the trapping of the sun's heat within the Earth's atmosphere, preventing its escape into outer space. The greenhouse effect is NOT necessarily a bad thing and existed before we humans did. Without it, the Earth's average temperature would approach 0°F versus today's 57°F.

Naturally occurring greenhouse gases include water vapor, carbon dioxide, methane and nitrous oxide. Together these act like the glass of a greenhouse; hence the name.

Too much of a good thing...

Modern human activity causes unnatural increases in the naturally occurring greenhouse gases. The consumption of resources — especially coal, oil and gas — is the primary culprit. More of the sun's heat that used to escape through the Earth's atmosphere now remains trapped.

The United States alone generates almost 40% of the greenhouse gases. A real concern is the gradual industrialization of the rest of the world. This will exacerbate the effects already observed. It is estimated that carbon dioxide levels alone will double in the next 65 years.

Not me! I drive infrequently and own a small, efficient car!

If you live in our modern society, you are contributing to global warming. If you eat store-bought food, use hot water, watch TV, keep a pet, read the newspaper, switch on a light, use a computer, maintain a grassy lawn, heat or cool your home, wash or dry your clothes, or even walk on carpeting — you're a culprit.

I'm Skeptical!

The philosopher Francis Bacon (1561-1626) wrote that nature best reveals her secrets when tormented. To date she's been exceedingly forgiving. But for how much longer?

In just the last 20 years, average temperatures climbed 7°F in Alaska, Siberia, and parts of Canada. The Intergovernmental Panel on Climate Change predicts that global temperatures could rise another 11°F by 2100. They've risen only 9°F since the last ice age! Marine biologists are now gathering evidence that coral are dying in warmer waters, and thus are predicting that the majestic coral reefs will be gone within 50 years.

So what?

The U.S. Environmental Protection Agency puts it succinctly:

Rising global temperatures are expected to melt polar ice. Warmer temperatures at the poles will change traditional ocean currents, winds and rainfall patterns. Changing regional climate could alter forests, crop yields, and fresh water supplies. It could also threaten human health, and harm birds, fish, and many types of ecosystems. Deserts may expand into existing rangelands. [There is] likely to be an overall trend toward increased precipitation and evaporation, more intense rainstorms, and drier soils.

The potential "domino effect" of massive climatic changes will be displacement of coastal populations, alternating desert and flooding conditions, and infestation by insects — crop-eating and disease-carrying.

There could be "water wars." As U.S. News & World Report® magazine stated in a special edition:

Drought and an accompanying lack of water would be the most obvious consequence of warmer temperatures. By 2015, 3 billion people will be living in areas without enough water. The already water-starved Middle East could become the center of conflicts, even war, over water access. Turkey has already diverted water from the Tigris and Euphrates rivers with dams and irrigation systems, leaving downstream countries like Iraq and Syria complaining about low river levels. By 2050, such downstream nations could be left without enough water for drinking and irrigation.

And there will be indeterminable repercussions.

What about El Niño?

El Niño, the periodic warming of the ocean in the tropical Pacific leading to heavy rains in parts of South America and Africa, is not caused by global warming. It is a phenomenon that predates man's ecological impact. However, El Niño events may become more frequent, longer and more intense. The effects of El Niño can be viewed as a global warming scenario in miniature.

What is the solution?

A voluntary cutback in the human activity that creates the byproducts contributing to the greenhouse effect. The well-intentioned Kyoto Protocol, an international treaty drafted in 1997 and signed by over 150 nations, dictated that greenhouse emissions be reduced to 7% below 1990 levels by 2012. (The U.S. would have to reduce emissions by 35%!) However, the political distaste for promoting a more frugal lifestyle and higher energy costs to encourage conservation prevented effective implementation of the guidelines.

If every energy spendthrift of modern society performed a one-eighty lifestyle change by adopting a conservation mindset, the synergy of "the power of one" and "strength in numbers" would likely reduce consumption and demand for energy sufficiently to render the global warming argument moot. Our children and grandchildren would be grateful.

— The Editors

*****

Time will tell...

posted by John Herman 9:34 AM

Thursday September 1, 2022

Déjà vu all over again... and again and again!

We thought we'd revisit Phrenicea's "Full Circle" page here since many of us still assume the tremendous change witnessed in the 20th century and the first two decades of the 21st, especially the Internet/Web/Social Media phenomenon, will continue throughout the 21st century to further complicate and speed up our lives. It will get worse and will become unbearable. The loss of privacy, proliferation of electronic gadgets, as well as technology-enabled violence and environmental factors will give rise to conditions that may lead to our fictional Phrenicea scenario becoming reality.

*****

"This is like déjà vu all over again." Yogi Berra

full circle

Function: adverb

Through a series of developments that lead back to the original position or situation — usually used in the phrase come full circle.

(Merriam-Webster)

*****

Although Yogi did not anticipate Phrenicea with his famous quote above, he was humorously astute enough to observe that change is inevitable, and that it can ironically come "full circle," or "back to square one," as it did on a grand scale once Phrenicea became integrated into daily living.

The capabilities of Phrenicea by 2050 in many ways led to less complicated lifestyles, so simple in fact as to resemble 18th and 19th century living, if not before.

Witness:

???? - Reading & writing is yet to evolve.

2050 - Reading & writing is extinct (made moot with Phrenicea).

35,000 years ago - Teeth become symbolic for communicating kinship and status.

Early modern humans sew extracted teeth of their dead ancestors on their clothing to show off a sense of community and distinction among their budding population.

2050 - Teeth become symbolic for communicating kinship and status.

As Phrenicea renders teeth vestigial with the consumption of Polynutriment custard, early adopters show off a sense of community and distinction by extracting and stringing their teeth upon their necks. Edentulous — and proud of it! (Colloquially referred to as "vestige prestige"!)

1895 - Radio, TV, VCRs, cell & wired telephone, fax, records, tapes, CDs, PCs and the Web/Internet do not exist (not yet invented).

2050 - Radio, TV, VCRs, cell & wired telephone, fax, records, tapes, CDs, PCs and the Web/Internet no longer exist (not needed with Phrenicea).

1860 - Automobiles, planes, jets, powered ships do not exist (not yet invented).

2050 - Automobiles, planes, jets, powered ships no longer exist (made moot with Phrenicea, as well as other factors).

200,000 years ago - One language — the first — exists in the world; Proto-Human language that becomes the common ancestor to all the world's languages.

2050 - One language — the last — exists in the world; tacit, non-verbal communication facilitated by Phrenicea, that becomes the common successor to all the world's languages.

1882 - Centralized energy-generating power plants not yet utilized.

2050 - Centralized energy-generating power plants no longer feasible.

1450 - No news is good news. Well, not quite. Newspapers — or forerunners thereof — have yet to be invented.

2050 - No news is good news. Well, not really. Newspapers — and derivatives thereof — are moot. There's nothing to report in a world facilitated by Phrenicea.

1700 - Food is grown locally and is consumed locally. Long-distance shipping not possible.

2050 - Food is grown locally and is consumed locally. Long-distance shipping economically infeasible.

2500 (B.C.!) - A person's financial worth is on their person. Coin, banks and financial institutions were yet to be invented.

2050 - A person's financial worth is on their person (DNA!). Coin, banks and financial institutions not needed with Phrenicea.

3,500,000,000 years ago - the most significant form of life on Earth at the time, arguably the simplest life form, prokaryotes, reproduce asexually.

2050 - the most significant form of life on Earth at the time, arguably the most complex life form, humans, reproduce asexually.

1837 - One computer — the first — exists in the world; the mechanical Analytical Engine invented by Charles Babbage.

2050 - One computer — the last — exists in the world; the biological brain-based "braincomb," aka Phrenicea.

You are invited to explore the Phrenicea site to better understand the scenario touched upon above. Many of us assume that the tremendous change witnessed in the 20th century, and especially in the 1990s with the Internet/Web phenomenon, will continue throughout the 21st century to further complicate and speed up our lives.

It will get worse, and it will become unbearable. The loss of privacy, proliferation of electronic gadgets as well as technology-enabled violence and environmental factors will give rise to conditions facilitating the infiltration of Phrenicea into our lives and culture.

Our overarching goal is to continue to be bold, defy convention and branch out into uncharted territory.

*****

Time will tell...

posted by John Herman 5:38 AM

Friday July 1, 2022

The T-shirt and the Future!

Summer is the season for T-shirts and we Phreniceanados are donning ours almost daily. The iconic T-shirt purportedly was born in 1913 when the U.S. Navy issued crewnecks for sailors to wear under their uniforms. And today, unlike most T-shirts with a message, ours suggests something that does not yet exist — a vision of the 21st century!

The Phrenicea T's screen-printed logo depicts a fictionalized account of what will occur in the first century of the new millennium. The name Phrenicea is coined from the words phrenic (of the mind) and panacea (cure all). Pronounced fren-EEE-shuh — our shirts instigate grimaces and quizzical, under-the-breath phonetic attempts at pronunciation — including fren-I-kee-ya, fren-i-SEE-ya, and more. Then the inevitable question typically follows, "So what's a Phrenicea?"

Phrenicea is a scenario — an extrapolation based on current trends including advances in biotechnology, the Internet/web phenomenon, and the consequent acceleration of social evolution.

Today, many continue to cling to the stereotypical future as depicted in the Jetsons cartoon, where technology reigns supreme, especially in the form of gadgets galore. Phrenicea turns that upside down. Gone are all the gadgets. Even the Internet and web are gone — replaced with Phrenicea. Thus, in many ways life ends up being, both socially and technologically, more like the primitive societies of yore.

Phrenicea is (initially) a consortium imagined to be formed in the year 2014. It was the ultimate consequence of the explosion in brain research, led at first by the world's pharmaceutical companies in pursuit of new drugs to ameliorate or cure brain disorders, particularly Alzheimer's and the like — followed by the promise of enormous profits that ensnared tech (search engine companies and chip manufacturers in particular), biotech, nanotech and even entertainment companies; and ultimately just about any entity with enough resources to pursue conquering the final (practically speaking) frontier — the workings of the human brain.

The optimism was contagious, as was the belief that the secrets of the human mind were at last going to be unveiled. But even with the vast financial and technical resources expended, the brain's inner machinations proved too elusive to elucidate.

What was successfully developed, however, was a working interface with the human brain. This soon was followed by the ability of individuals — triggered by mere thought — to connect and communicate with ("engage") a complex of interlinked brains that were "donated" by gullibles seeking eternal consciousness.

These in vitro brains were kept alive artificially within hexagonal-shaped capsules — each becoming a contributing member (knowledge, experience, perspective, etc.) in a gigantic beehive-like chamber fittingly called the "braincomb."

Surprisingly, there was no shortage of volunteers. But who would donate their brains upon death? Baby Boomers, of course! As the weight of years shattered their illusion of youth despite their famous moniker, they accepted the reality of mortality with the promise of foreverness.

The confluence of the various technologies synergistically enabled the transmission and storage of massive amounts of data related to human discourse. Just as the early 21st-century Internet made the physical collection of books, music, and other "hard copy" moot — Phrenicea rendered "life's hard copy," the physical and tactile components of daily living, irrelevant.

The capabilities of Phrenicea rapidly became so powerful that there was no longer a need to produce most of the gadgets upon which modern society depended. Suddenly the rage was to "engage," the popular term used to describe a mental hook-up with Phrenicea.

Phrenicea became the central source of knowledge for the human race, accessible not via computer or other device, but by mere thought — indistinguishable from what was once simply "thinking." The popular business-management phrase "thinking outside the box" was prescient beyond anyone's wildest dreams!

As with most trends, which often reach a peak before entering a period of decline, the distributed nature of the Internet (where information reached its most dispersed form, spanning millions of server computers throughout the world) fostered redundancy, inaccuracy, and unseemly (in the literal sense) waste.

In an about-face, the final phase of the "Information Revolution" entailed the replacement of the information hodgepodge that grew willy-nilly for decades with a centralized data entity, none other than Phrenicea. Now data validity and wholesomeness could be ensured.

Phrenicea eventually became more important and powerful than government and corporations combined. Those two powerful entities, like the goods and services they had once provided, were now irrelevant.

*****

It was never fathomed over a hundred years ago that the T-shirt would become a global force in personal communication, nor that one with Phrenicea emblazoned on its front would represent a vision of the future. Now, to share that vision, we're giving away a quality Phrenicea T-shirt!

Enter today and you may be a winner!

Time will tell...

posted by John Herman 5:54 AM

Sunday May 1, 2022

Evolution is the Future!

We thought we'd revisit Phrenicea's "Evolution" page here since it's quite rare for a website to have been online since the year 2000. We'll look back at Phrenicea's first steps and progress through the years.

*****

"Nothing endures but change." Heraclitus 540 BC – 480 BC

evo•lu•tion

A process of continuous change from a lower, simpler, or worse condition to a higher, more complex, or better state.

(Merriam-Webster)

*****

Occasionally we like to look back and reflect on Phrenicea's first steps and our progress so far. (What we immodestly consider the history of the future!)

It's quite rare for a website to be online for more than two decades! Here we'd like to share some of that progress with you. By clicking on the images, you can download the short story that started it all, or view several snapshots of the website as it developed over time.

Allow us to indulge you with the evolution of the Phrenicea scenario!

Way back in May 1999, at the height of the dotcom boom, the short story "The Engagement of Phrenicea" predicted the demise of the Internet and web — and suggested both would be replaced with a central controlling entity called Phrenicea.

That following November, in an attempt to sell the short story online — well in advance of Stephen King(!) — it was uploaded to fatbrain.com, a hyped-up e-publishing website, to be sold as "eMatter." Then in March 2000, at about the same time Fatbrain was renamed MightyWords, it was decided that a portion of the short story's content would be translated into a website. But should we name the website Phrenicea?

Happy Birthday Phrenicea.com! "Ten [or more!] years is a long time on the Internet" -Time magazine

The brand new phrenicea.com website — uploaded April 2000.

All of three pages, it generated fantastic excitement — among ourselves! Imagine that these pages could be viewed by anyone worldwide! It was really a thrill at the time.

The format was a hideous Microsoft FrontPage template plus some elementary HTML code. (Thank goodness we were not yet known to any search engines!)

By mid-August 2000, the official Phrenicea logo is launched, along with a more readable format. Typically defying conventional wisdom, we adopt the use of frames with orphan pages that are problematic to search engine directories.

In October 2000, our still-novice webmaster's discovery of how to display linked images enhanced the visitor experience.

When the dotcom bubble burst and our overblown e-publishing dream vanished, we decided to present the entire scenario on the web and later offered the short story for free.

Phrenicea today. Often misspelled and mispronounced, the site is still a work in progress. The web version of the scenario is now quite beyond the original short story.

Not yet a household word, Phrenicea continues to extend its reach across cyberspace. We like to think — some would say thankfully — that there's no other site quite like it.

Our overarching goal is to continue to be bold, defy convention and branch out into uncharted territory.

*****

Time will tell...

posted by John Herman 7:49 AM

Tuesday February 1, 2022

The End of Car Collecting?

Overview:

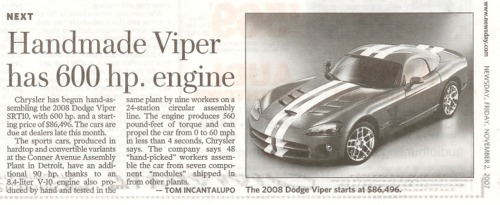

What began as a humble hobby, arguably during the second half of the last century, is today Big Business, with million$ passing between buyers and sellers of collectible automobiles. Internet car auction sites spurred the hobby on with easier access via online searching and technologically advanced payment systems. Auction houses like Gooding & Company, Barrett-Jackson and RM Sotheby's give participants access to thousands of sought-after cars.

We at Phrenicea like to look beyond the present and foresee this as a phenomenon that will soon be in decline.

It became apparent recently that the collectible hobby for cars will likely go the way of stamps and coins. This is due in part to today’s youth lacking interest in anything not related to a phone app, but more so because too many functions have been relegated to electronics.

A heretofore very reliable 2005 Acura TL suddenly couldn’t accelerate over 2000 rpm. Engine lights and codes pointed to a faulty APPS (accelerator pedal position sensor).

A YouTube search revealed that although the accelerator pedal is connected to a cable, the cable ultimately does not connect to any mechanical linkage. Instead, it is connected to a sealed plastic module — with the cable going in and an electrical wire with a connector going out! Essentially an irreparable black box containing a silicon chip. The part was fortunately still available and the swap was made.

Modern cars dependent on electronic rather than mechanical components will never make it to collectible status, for once their irreparable electronic parts are gone from inventories, they’ll be rendered useless.

Worse, as cars go totally electric for a generation that barely knows a wrench from a screwdriver, the car will be nothing but an enigmatic appliance. Without the emotional connections established between man/woman and machine during the formative years, there will be no nostalgia-driven memories to literally and figuratively restore.

To conclude on an optimistic note, perhaps today's old cars will become tomorrow's even older cars, preserved by a cadre of grease-laden auto historians smearing dog-eared pages of car manuals from decades past.

Time will tell...

posted by John Herman 6:32 AM

Wednesday December 1, 2021

The End of Money?

There's much talk recently about the "end of money" as we know it. Bitcoin and other cryptocurrencies are vying for our virtual wallets and pockets.

Not surprisingly, this website in 2000 proclaimed that there would not be paper or coin money — or even financial institutions — with Phrenicea. Financial transactions would be accomplished via the engagement of Phrenicea, and recorded forever on a man-made 24th-chromosome pair, colloquially referred to as "brainerama." The world's coins, currency and electronic funds are replaced with what's commonly called "soma-cash" or "tissue-issue."

Almost everyone's financial assets and liabilities became part of his physical body — accessible to Phrenicea via engagement to effect transactions for the transfer of "DiNAs," the DNA-based unit of wealth.

Self-worth became more than a subjective appraisal! And of course there were humorous references to what was, in effect, a new interpretation of chattel, such as "heart of gold," "tender loin," and "your ass-ets"!

So how does one now acquire wealth and a positive sense of self-worth?

With Phrenicea, rank or position is based on biology — not commercial capitalism, the accumulation of material things or subjective criteria.

The value of a person's brain — its potential utility to Phrenicea — determines his/her status. This value is assayed daily by Phrenicea, so in the event of sudden death it (the brain) can be quickly donated (more like claimed!) and integrated effectively into one of Phrenicea's braincomb chambers. The daily remuneration process — based on future brain utility — is by way of DiNAs applied to brainerama.

At the top of this new social order are natural philomaths (lovers of learning). They are the fortunate ones, and a rare breed indeed! By exercising their brains — proactively engaging and interfacing with Phrenicea — they take in the factual knowledge that Phrenicea so easily provides and use it to solve intellectual conundrums, to reason, or to create new ideas. These are the cerebral capabilities that one day will provide value to Phrenicea; the functions that no computer was ever made to perform successfully.

On par with the philomaths are those contracted to be genetic progenitors or GPs. Blessed with valuable inherited genomes (in terms of physical features, disease resistance, or whatever subjective criterion a g-twin initiator finds valuable enough to shell out their hard-earned soma-cash for) they are highly compensated to clone themselves.

Finally, the somewhat rebellious would-be philomaths that equate sedentary study with sloth — those also "good with their hands" for manual, dexterous work — are in tremendous demand. They are chiropractors and the highly respected working class that maintains the world's physical infrastructure. Coming full circle, "alternative modalities" and "blue collar" now command considerable respect — and tissue-issue!

How big is your brainerama?

Time will tell...

posted by John Herman 8:21 AM

Saturday May 1, 2021

Phrenicea — The ultimate goal of evolution?

Given current world tensions that hark back to pre-WWII simmerings that led to widespread death and destruction, we'll visit a Phrenicea page uploaded way back in 2000 depicting a scenario that might finally put an end to history repeating itself.

*****

There's no crime with Phrenicea!

Artificial genes collectively called "brainerama" manipulate gene expression and produce hormonal parameters and biological switches — in simplistic terms — that could be set or reset by Phrenicea to control or monitor an individual's behavior and actions "officially" defined as antisocial, deleterious, criminal, etc.

All forms of behavior and thinking were pigeonholed into just two categories: "right" and "wrong." This compilation of binaries became known as the "Black and White Dictum," and was the root of Phrenicea's judgmental construct.

Initially, there was a small concern that George Orwell's Big Brother had finally arrived. But the reality was that Phrenicea's controlling nature was welcomed with open arms (and minds!).

Early in the 21st century, it became evident that technology would empower the individual with the potential to change or destroy on a scale formerly limited to mighty superpower nations. Distributed knowledge and capability threatened the very existence of civilization and humankind. It was déjà vu for those who could remember the Cold War fears of worldwide obliteration. Only this time the source of fear could be counted in the billions, and not on one hand.

A few sci-fi fanatics among the early proponents of Phrenicea, members of the aging population of baby-boomers hoping to preserve their memories forever within the Phrenicea braincomb, melodramatically recalled the epilogue by the "Control Voice" from an early TV episode of The Outer Limits way back in 1963:

"May we not still hope to discover a method by which within one generation the whole human race could be rendered intelligent, beyond hatred or revenge, or the desire for power? Is that not — after all — the ultimate goal of evolution?"

The boomers' last gasp of activism fueled further worldwide concerns about safety and security to finally — many decades after that "Golden Age of TV" — discover a method to preponderate the whole human race.

"Implemented" just in time, Phrenicea was given the ability to simultaneously monitor every person's thinking and behavior constantly.

That is not to say that freethinking was abolished. Those generally recognized as "law-abiding and normal," whose moral or intellectual beliefs precluded what is considered anti-social or criminal, had little to be concerned about. Phrenicea could on occasion intervene to compensate for behavioral weaknesses and prevent one from actually acting out thoughts deemed unacceptable or dangerous to oneself or society.

On the other hand, individuals not irenically inclined — with mostly violent or destructive thoughts — were subjected to constant behavioral manipulation for the sake of everyone's safety.

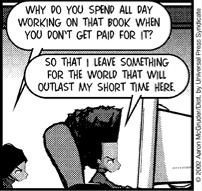

NON SEQUITUR ©2004 Wiley Miller. Dist. By UNIVERSAL PRESS SYNDICATE. Reprinted with permission. All rights reserved.

So, to paraphrase the Control Voice of The Outer Limits in the here and now:

"Is Phrenicea not — after all — the ultimate goal of evolution?"

Time will tell (for us all)...

posted by John Herman 6:22 AM

Monday February 1, 2021

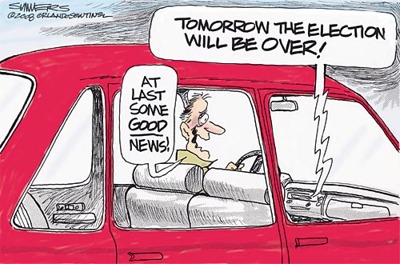

Democrat or Republican?

Dana Summers © 2008 Tribune Media Services, Inc. All rights reserved.

The following Phrenicea web page was first published shortly after the controversial presidential election in 2000. That was when we learned about "hanging chad," the bit of paper that was not quite punched out of what was then commonplace computer punch-card ballots.

With the steady pace of technology in the ensuing two decades, punch cards are history and a good portion of the futuristic Phrenicea scenario is not quite as farfetched as it once seemed.

Unfortunately for us now, however, the voting scenario described below is just as farfetched as it was in 2000. Too bad, since under it no one could credibly claim that election rigging or cheating had affected the results.

*****

"I can't wait until it [the election] is over." Scott Rasmussen, pollster and founder of Rasmussen Reports

Democrat or Republican?

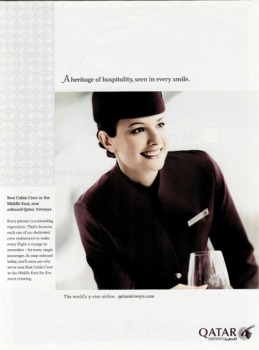

The interminable, down and dirty, mind-numbing campaigning by both major parties for the 2020 U.S. presidential election offered a great opportunity to discuss the mid-21st-century capabilities of Phrenicea.

With Phrenicea every eligible voter has complete access to the entire unbiased history of each candidate — objective factual and biographical information resident within the Phrenicea braincomb — to decide which candidate is the best choice.

Rhetoric can only be persuasive with the ill informed. Phrenicea ensures that no voter is uninformed! And a vote based even partly on subjective criteria is summarily rejected.

When ready to have their decision tallied, voters merely engage Phrenicea to mentally cast their "ballot." That's all there is to it. With Phrenicea there are no mechanical or electronic devices for casting or recording votes. Voter identity and confidentiality are guaranteed as is the number of votes they can submit — just one!

Unbelievable? Continue reading...

Because Phrenicea continually monitors every individual's behavior, thought process and physical whereabouts on the planet, it can easily be the recipient of voting preferences.

Phrenicea associates the thought transmission of a person with their unique DNA "fingerprint" in its database. A person's genome (particularly their "snips" or SNPs) is also a component of their transmissions with Phrenicea, ensuring security and protection from unauthorized engagements.

Incredible too, from the perspective of today, is that every Phrenicea session is permanently recorded and can be recalled by the engager.

Phrenicea can also be the "engager." The engage sensation, when a transmission is received, can only be described as similar to a spontaneous thought popping into the mind.

Confirmation of a transmitted vote is received in this manner!

To sustain a typical Phrenicea session, a certain mental discipline has to be learned to avoid being overwhelmed with sensory input or linking incoherently to disjointed information. When Phrenicea was first put into use, many people could not adapt to it. And many were not allowed to! For children growing up with it from infancy, however, it’s second nature.

So how would you learn about your candidate? Here's a generic explanation as described in the original Phrenicea charter drafted in 2014:

Conscious thought is enough to engage a link-up and begin a session with Phrenicea. For example, to compute the sum of a string of numbers or obtain the date and time, the associated thought triggers a session, if only for a fleeting moment. The information is returned instantaneously as if it were knowledge conjured up by the mind. Answers to any academic question can be provided similarly. For topics involving subjective thought or interpretation, Phrenicea provides multiple sources of information allowing the engager to analyze, evaluate and form an opinion — often with epiphanic delight.

Now you can ponder whether you will miss the election-season spectacle of verbal fisticuffs, since rhetoric can only be persuasive with the ill informed.

© 2008 by King Features Syndicate, Inc. World rights reserved.

Did you know? Psephology — pronounced see-FAH-luh-jee — is the scientific study of elections!

*****

Will free and fair elections, in terms of both perception and reality, be realized before the Phrenicea scenario comes to fruition?

Time will tell...

posted by John Herman 7:22 AM

Wednesday July 1, 2020

Pick Me! Pick Me! Me Me!

The following scenario was originally presented in 2005. Imagine your life's purpose not related to the present, but only to get your brain in shape for near-eternity. Are YOU only living for the future?

*****

Ambition is a human characteristic that comes in many forms and varieties, and in varying quantities. Some have little to none — probably due to never being fortunate enough to find their niche in life. Others seem to have unbounded energy for accomplishment.

Ambition today is exhibited in such familiar forms as the quest for wealth, the striving for professional perfection, physical prowess, the attainment of fame, the upheaval of the status quo, or the unselfish acquisition of knowledge for its own sake.

By midcentury when Phrenicea becomes an overwhelming controlling entity, personal ambition becomes scarce. An unintended consequence of Phrenicea's societal insinuation was the apparent difficulty in overcoming inherent complacency that accompanied instantaneous access to all the world's knowledge, instinctive physical skills, as well as total comfort and health — albeit within the confining quarters of a cubicle.

For the few determined to resist contentment and sloth, energies were now channeled towards a single goal — that of higher learning and thought for the purpose of increasing the "value" of their brain — to Phrenicea.

Since there would be no limit to the size of the Phrenicea braincomb, nor to the number of brains that comprise it — whoever could exercise and improve their brains sufficiently in life, to become eligible for Phrenicea selection, would potentially have their brain survive nearly forever within one of a vast conglomeration of hexagonal chambers.

However, only brains that qualified with sufficient brainpower to pass a rigorous test regimen would be chosen. Consequently there could be no higher level of status attained than to be designated early in life as a braincomb candidate.

*****

Imagine your life's purpose not related at all to the present, but only to prepare your brain for near-eternity in service to others within a Phrenicea braincomb chamber!

All this may sound farfetched or silly — but are YOU too ignoring the present and in some way foolishly living only for the future?

Time will tell...

posted by John Herman 10:21 AM

Monday June 1, 2020

Don't touch me, I'm sterile!

Given the recent day-and-night coverage of COVID-19, we thought we'd revisit Phrenicea's "Sterile" page first uploaded in January 2003, recalling the famous "don't touch me" quote first uttered by TV's Ed Norton in a 1955 episode of The Honeymooners.

What was back then a humorous line of TV dialog is today a clarion call for self-preservation.

*****

"Don't touch me, I'm sterile!" Ed Norton, from TV's Honeymooners

Don't touch me, I'm sterile!

Ed Norton (played by the talented Art Carney) from the 1950s TV show The Honeymooners never fathomed that his numskull one-liner would, a century later, become a daily caution for the entire world population! Imagine:

Returning home after being out and about and not being able to enter your main living quarters until you washed and changed from your "outside" clothes.

Imagine:

Being admonished to "wash your hands!" at every turn.

Imagine:

Never wearing your "outside" shoes indoors.

Imagine:

Not feeling comfortable physically touching or even being in proximity to others.

Imagine:

Being forever conscious of being contaminated with disease-causing germs.

Imagine:

Being leery of touching anything.

Imagine:

Resigning to just stay home to avoid worry and risk of contagion.

Imagine:

This is only the beginning...

*****

At about the same time that Phrenicea came into being, drastic behavioral modifications were adopted to avoid contracting virulent infectious diseases. After decades of the world's population commingling as the result of technology making the world smaller, super-precautionary lifestyles were the norm as germs became more resistant to the efforts to eliminate them.

Those brave enough to be peripatetic had to be ever conscious of potential sources of contamination such as doorknobs, handles, handrails, seats and other objects of common contact. (Water fountains, velour seats and buffet servers become hot collectibles!) Even more challenging was trying to avoid inhaling airborne skin detritus and mucous droplets. This fear of contamination was a significant contributing factor leading to an almost universal acceptance of cubicle dwelling by midcentury.

Venturing out ITF or "in the flesh" was a rare event accompanied by bizarre behavioral adaptations such as:

- Incessant use of alcohol-based antibacterial sanitizers

- Rubbing elbows as a greeting gesture

- Donning surgical masks, caps and even gloves

In addition, clothing worn outdoors would never again be worn indoors and was stored separately from the "inside" wardrobe. When soiled, it was discarded rather than laundered.

Making matters worse, mass disinfections became a common occurrence, not unlike the highly publicized procedure performed in 2002 on the cruise ship Amsterdam as it sat idle for a thorough disinfecting to combat the Norwalk virus. Every pillow and mattress was replaced, gift shop souvenirs were destroyed and literally every surface of the ship's interior and deck was awash with a chlorine solution.

So enjoy the buffets, soft velour seating and other aspects of today's life that we take for granted — and try not to be conscious of what may be lurking on whatever you come in contact with in the public domain!

*****

This page was originally posted in January 2003. Perhaps now the other crazy Phrenicea predictions should be taken more seriously.

Time will tell...

posted by John Herman 8:22 AM

Sunday March 1, 2020

Congratulations, Motor Trend Magazine!

Congratulations are extended to Motor Trend magazine for 70 years of outstanding coverage of the past, present and future of the automobile. And thank you, Motor Trend, for selecting our letter expressing appreciation for the magazine's personal influence as well as its industry-wide impact on both the publishing and auto industries.

posted by John Herman 9:02 AM

Wednesday January 1, 2020

The Dumbing Down of Scientific American

I often wonder how magazine cover stories are chosen. Yours of August 2015, "How We Conquered the Planet," would be more appropriate on the cover of a potential sister publication UnScientific American. How did the associated article peppered with “I think,” “I propose,” “I surmise,” “We think,” “But perhaps,” “I suspect,” “We do not yet know,” “I am not totally convinced,” even make it into your magazine? What a colossal waste of eight pages on drivel!

With all due respect to Professor Curtis W. Marean, the premise of the article is bogus — there is no evidence that the study of H. sapiens’ colonization to date has misled scientists. Proposing too that there is a need to homogenize various theories into a single explanation is contrived, perhaps for self-aggrandizement. The span of time involved and the magnitude of H. sapiens’ worldwide colonization almost presupposes that there would be several scenarios for the astonishing success of the geographic penetration.

Taking existing unearthed evidence and weaving it into a fantasy scenario, supported with the specious claim that we evolved a genetic proclivity for cooperation with unrelated individuals, is patently absurd. Worse, the attempt to lend academic credence to the fabrication with the coining of new terminology (hyperprosocial behavior) is embarrassingly self-serving of the author and insulting to the reader.

Worse still, Marean approaches the border of novelistic fiction, claiming that, “A spectacular new kind of creature was born,” as a result of his manufactured “hyperprosocial” behavioral characteristic yielding an “indomitable predator” felling all prey in its path. This makes for good drama but not objective science.

Finally, confident that we’re convinced by the last paragraph, Marean claims as fact that “Science has revealed the stimuli that trigger our hardwired proclivities to classify people as ‘other’ and treat them horrifically.” Oh really? Where are these hard-wiring genes controlling this psychology and behavior? This preposterous hypothesis is unprovable. Adding insult to injury, he then self-righteously closes with a moralistic homily urging us all to rise above the ascribed lethal tendencies that are a figment of his conceit.

Scientific American, please: “Never Again.”

posted by John Herman 5:34 AM

Saturday June 1, 2019

A Better Green New Deal?

Overview:

We at Phrenicea have a practical and creative proposal that could be a feasible addition to the Green New Deal — where we can get in shape, improve our health and maybe even get paid for it!

What's now being called Extreme Weather is increasingly prevalent in the news (hurricanes, flooding, and tornado catastrophes) and is often attributed to Climate Change, which in turn is often blamed on human activity. But many are skeptical, as it's a complex issue whose cause and effect cannot be observed definitively. The naysayers point out that Climate Change was going on for eons before we humans had any impact. There is even a bona fide theory that rapid changes in climate in prehistoric times caused humans to become smarter, forcing the brain to evolve to greater complexity and intelligence. The cynical among us might then proclaim, "OK then, Climate Change is a good thing!"

To our rescue from Climate Change, nevertheless, is the Green New Deal: an economic stimulus package proposed by a politically left-leaning contingent that has been chastised by the right-leaners as naive and impractical, if not downright silly. A glaring example is the proposal to eliminate all cows due to the heat-trapping methane gas they emit (flatulence). Following this line of thinking, a more logical solution would be to consider banning all pets, as suggested in the Phrenicea scenario of the future, since they are over-populating, polluting, energy intensive and serve no economic purpose, unless perhaps they'd be consumed after serving as a pet.

Outrageous? Well yes, but these are desperate times.

Given that the typical left-leaning, secular World View holds that we humans are not superior beings but just another animal on a branch of the evolutionary tree, logically we should be the primary target for elimination. By sheer numbers alone we are the worst polluting entity on the planet.

We all contribute to Climate Change directly and indirectly just by existing with our way of life. So in good conscience, it's misguided to single out helpless animals.

For example, we're guilty of:

- driving in cars, gas or electric

- causing pollution by trucks, by consuming the goods they transport

- emitting flatulence, perhaps more than the helpless cows

- using ever more electricity

- heating and cooling our homes

- drinking bottled water, requiring energy consumption throughout the bottle's life cycle

- generating trash that goes out of sight, out of mind

- shopping online utilizing energy on phones, computers and for shipping

- storing and using hot water

- What else will you admit to?

Guilty? Yes, but instead of facetiously suggesting our elimination, a better strategy would be to offset our personal energy consumption with energy production. But how?

For decades we’ve engaged in jogging, aerobics, and pumping iron to get in shape. And too many have been going nowhere on expensive contraptions for stationary biking, running, climbing and rowing. When you take the time to think about it, it's absurd that we are the only species that expends precious energy on repetitive motion that is totally non-productive, other than working up a sweat!

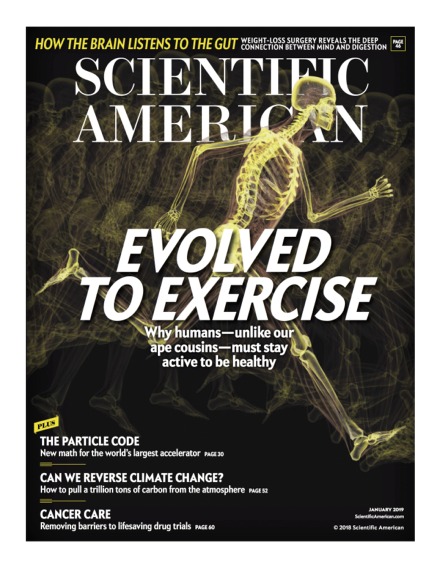

By modifying our exercise equipment we will become power generators! Up to now this exercise might have been considered mostly a selfish "vain gain" activity, and for many in vain. But there's pumping iron-y (!) in the January 2019 edition of Scientific American, which presents evidence that exercise for humans is not optional but imperative. New research reveals that our ancestral nomadic existence of hunting and gathering food is forever wired into our genes, hence the need for physical activity.

With this new authoritative incentive to exercise, we at Phrenicea have a practical and creative suggestion that could be a feasible addition to the Green New Deal — where we can get in shape, improve our health and maybe get paid for it by generating electricity!

We propose that if we are smart enough to know through science that we need to be on the move, we should be smart enough to harness our exercise sessions and wasteful expenditure of calories.

If we connected our muscle building stations, rowers, bikes and elliptical machines to the electrical grid, we could be power generators producing kilowatts — rather than wasting millions of calories perspiring for vain gain!

Compared to burgeoning state-of-the-art technology like self-driving cars, hooking up generator-configured exercise machines to the electrical grid is low tech. If we’re going to be the only earthly creatures to engage in such self-torture, let’s not continue to waste potential energy!

A brilliant idea, yes? But a Google search after writing all this revealed that this idea goes back to 2010! Apparently it never took off. So the Green New Deal should now demand (!) we be connected and online beyond computers and phones — to the electrical grid! Thus exercise will not only be beneficial to our health and longevity, but will contribute to our energy reserves and perhaps our bank account.

A better Green New Deal should champion Planet fitness — for the Earth and humanity!

Time will tell...

posted by John Herman 6:27 AM

Monday April 1, 2019

Water On the Brain Syndrome

Now that the lazy, hazy, crazy days of summer are almost upon us, it's a good time to use the sultry weather as an opportunity to revisit yet again our feverish condition of "water on the brain." Way back in 2001 our "H2Ouch!" page began recommending the following:

"Pretend you were to pay $1/gallon the next time you take a shower or bath, brush your teeth, flush a toilet, wash the dishes, or God forbid — water the lawn! Begin to use less water than the average person. Set an example. Prevent H2Ouch!"

So here we are 18 years later, and we still believe this is good advice, but perhaps the "hyperhydro" proposal was and still is naïve. The problem is that there is little incentive to conserve fresh water from the tap, given its ridiculously low price. For example, I received my "Annual Water Supply Report" from my local water company and was dismayed at how little fresh water costs. Here's the breakdown:

Quarterly Water Rates — Residential

| Consumption (gallons) | Charges |
|---|---|
| Up to 10,000 | $15.00 minimum |
| 10,001 - 60,000 | $0.95 / thousand gallons |
| 60,001 - 100,000 | $1.21 / thousand gallons |
| Over 100,000 | $1.47 / thousand gallons |

A dollar for 1,000 gallons of clean, fresh tap water? That's insane! By comparison, bottled water costs about $1.99 a gallon. Not bad, but that's $1,990 for 1,000 gallons. Why is there such a cost disparity with tap water? How can anyone be motivated to conserve water at these low rates, other than via a guilty conscience? And let's face it: there aren't many turning on their taps laden with guilt. (If the water companies got savvy, they'd take their image upmarket with exotic brand names, pricing and refillable bottles with fancy labels adding cachet to their product. Imagine having bragging rights to elite-sounding potable water! It's not that silly a suggestion, since that is essentially what Coca-Cola did with Dasani and PepsiCo with Aquafina. They're both filtered municipal tap water.)
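To make the tap-versus-bottle comparison concrete, here is a minimal Python sketch of a quarterly bill under the residential rate table above. The report doesn't spell out how the tiers combine, so this assumes (our reading, not the water company's) that the $15.00 minimum covers the first 10,000 gallons and that each higher rate applies only to the gallons falling within its tier. The function names are purely illustrative.

```python
# Hypothetical sketch: quarterly tap-water bill vs. the same volume of bottled water.
# ASSUMPTION: the $15.00 minimum covers the first 10,000 gallons, and each
# higher tier's rate applies only to the gallons that fall within that tier.

MINIMUM_CHARGE = 15.00
MINIMUM_GALLONS = 10_000
TIERS = [
    # (upper bound of the tier in gallons, dollars per 1,000 gallons)
    (60_000, 0.95),
    (100_000, 1.21),
    (float("inf"), 1.47),
]
BOTTLED_PER_GALLON = 1.99  # approximate bottled-water price quoted in the post


def tap_water_bill(gallons: float) -> float:
    """Quarterly charge under the assumed marginal-tier reading of the table."""
    bill = MINIMUM_CHARGE
    lower = MINIMUM_GALLONS
    for upper, rate_per_thousand in TIERS:
        if gallons <= lower:
            break  # no usage reaches this tier
        billed_gallons = min(gallons, upper) - lower
        bill += billed_gallons / 1000 * rate_per_thousand
        lower = upper
    return round(bill, 2)


def bottled_water_cost(gallons: float) -> float:
    """Cost of buying the same volume as bottled water at the quoted price."""
    return round(gallons * BOTTLED_PER_GALLON, 2)


if __name__ == "__main__":
    for gallons in (1_000, 20_000, 75_000):
        print(f"{gallons:>7,} gal: tap ${tap_water_bill(gallons):,.2f}, "
              f"bottled ${bottled_water_cost(gallons):,.2f}")
```

Under that reading, 20,000 gallons in a quarter costs $24.50 from the tap versus $39,800 in $1.99 bottles, which is the disparity the post is getting at.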

What we really need is a "watershed moment": a trickle-down epiphany to appreciate how finite and precious our water supply is. The first step should be to make users conscious of their water consumption, and that can be accomplished handily by raising the price per gallon and using a more dramatic cost gradient for excessive use. It sounds crazy, but those concerned about conservation should lobby for price increases.

Another way to raise awareness might be to move our water meters out from their usual obscure locations into full view in kitchens and bathrooms, fitted with big, red digital read-outs displaying gallons used in real time. Education on where our water comes from and how it's treated, stored, delivered and renewed would also serve to engender an appreciation of what is the major constituent of all living things.

There's an old saying about spendthrifts: they "spend money like it's water." Maybe we should instill among ourselves a new idiom-cum-mantra to "spend water like it's money." We all tend to waste water and take its abundance for granted; the habit even spills over, unintentionally, into the comics:

© 2007 Baby Blues Partnership. Reprinted with Special Permission of King Features Syndicate.

Did Zoe and Hammie's tub really have to be filled to the brim?

Wouldn't it be prudent to pay more today to change our wasteful habits, while adopting a mindset focused on conservation to build "aqua equity" for future generations?

Time will tell...

Presented as a "Timely Yet Timeless" post by John Herman 6:19 AM

Monday October 1, 2018

An Open Letter to Scientific American

The Web and social media have been devastating the entire magazine and newspaper industry. In an attempt to retain interest and readers, many have compromised journalistic standards for shock value and have been accused of purveying “fake news.” Scientific American hasn’t stooped that low, but its objectivity is slipping. In the April 2018 issue, Michael Shermer speaks proverbially out of both sides of his mouth in his editorial “Silent No More.” It appears he was struggling to fill up space in his monthly column, given his vacillation and hesitancy to proffer an opinion.

Dear Scientific American Editors,

Michael Shermer [April 2018] speaks proverbially out of both sides of his mouth in his editorial “Silent No More.”

He references four polls measuring religious affiliation (2013 Harris Poll, 2015 Pew Poll, 2017 Pew Poll, 2014 Austin Institute Survey), then casts doubt on the veracity of the responses due to social pressures and stigma.

He then cites two additional Bayesian-adjusted surveys attempting to indirectly render a credible estimate of the number of atheists in the population.

Still doubtful of their inferences, he feels the need to qualify his subtitle “The Rise of Atheists” and the estimated number of atheists with “If true...” After the 2016 election’s surprise, he should indeed cover himself before positing an editorial premise and/or conclusion based on polling.

Skepticism aside, he IS onto something with his assessment that we need to ground our morals and values and find meaning in our lives.

Without polls and complicated statistical analysis, it’s readily evident that these need to be addressed.

Crimes involving moral turpitude are increasing and are often attributed to easy gun access, effects of social media, violent video games and more. Little is said about the abandonment of religion and its potential consequences.

Declining church attendance, current divorce rates, and the rise of the LGBTQ community offer evidence beyond polling to conclude that secularism, and perhaps atheism, are on the rise.

School curriculums, news and entertainment media, and publications like Scientific American that matter-of-factly subscribe to Darwinian evolution contribute to secularization.

This is becoming a predominant world view, while the evidence supporting Darwinism through chance and undirected processes is in reality based on empirics and an interpretation of artifacts: an interpretation with many holes. Additionally, the theory’s plausibility is becoming increasingly strained as cellular complexity is elucidated.

Perhaps the time has come for a healthy skepticism towards evolutionary dogma, which may then spark new hypotheses that better lend themselves to our finding meaning and grounding our morals and values.

Sincerely,

John Herman

Time will tell...

posted by John Herman 6:42 AM

Saturday September 1, 2018

An Open Letter to Scientific American

In the August 2016 issue of Scientific American, author Annie Murphy Paul discusses the Computer Science for All initiative championing computer science instruction in public schools, specifically the teaching of computer coding. Herewith is our argument against that initiative.

Dear Scientific American Editors,

The "Coding Revolution" calling for all students to learn computer programming is misguided, as it assumes technology will not (yet again) evolve. There have been computer programmer shortages dating back to the 1970s, when the profession was called Data Processing. Imagine forcing students then to learn COBOL, CICS and JCL: centralized mainframe technologies that took us from purely manual processes to the first phase of automation utilizing punch cards, dumb terminals, voluminous printouts and daily batch transaction updates. By graduation they would have been qualified merely to maintain legacy applications being supplanted by distributed, real-time systems utilizing C++, Java et al.

Time in the classroom is precious. Once-critical skills are already not being taught, and learned knowledge is being replaced with ad hoc web searches. There are now young adults who cannot tell time via a dial-faced